On Celery, your deployment's scheduler adds a message to the queue and the Celery broker delivers it to a Celery worker (perhaps one of many) to execute. To optimize for flexibility and availability, the Celery executor works with a "pool" of independent workers and uses messages to delegate tasks. Celery executor Īt its core, the Celery executor is built for horizontal scaling.Ĭelery itself is a way of running python processes in a distributed fashion. Until all resources on the server are used, the Local executor actually scales up quite well. How much that executor can handle fully depends on your machine's resources and configuration. Heavy Airflow users whose DAGs run in production will find themselves migrating from Local executor to Celery executor after some time, but you'll find plenty of use cases out there written by folks that run quite a bit on Local executor before making the switch. The obvious risk is that if something happens to your machine, your tasks will see a standstill until that machine is back up. It's dependent on a single point of failure.It's straightforward and easy to set up.For example, you might hear: "Our organization is running the Local executor for development on a t3.xlarge AWS EC2 instance." Pros In practice, this means that you don't need resources outside of that machine to run a DAG or a set of DAGs (even heavy workloads). A single LocalWorker picks up and runs jobs as they’re scheduled and is fully responsible for all task execution. The Local executor completes tasks in parallel that run on a single machine (think: your laptop, an EC2 instance, etc.) - the same machine that houses the Scheduler and all code necessary to execute.

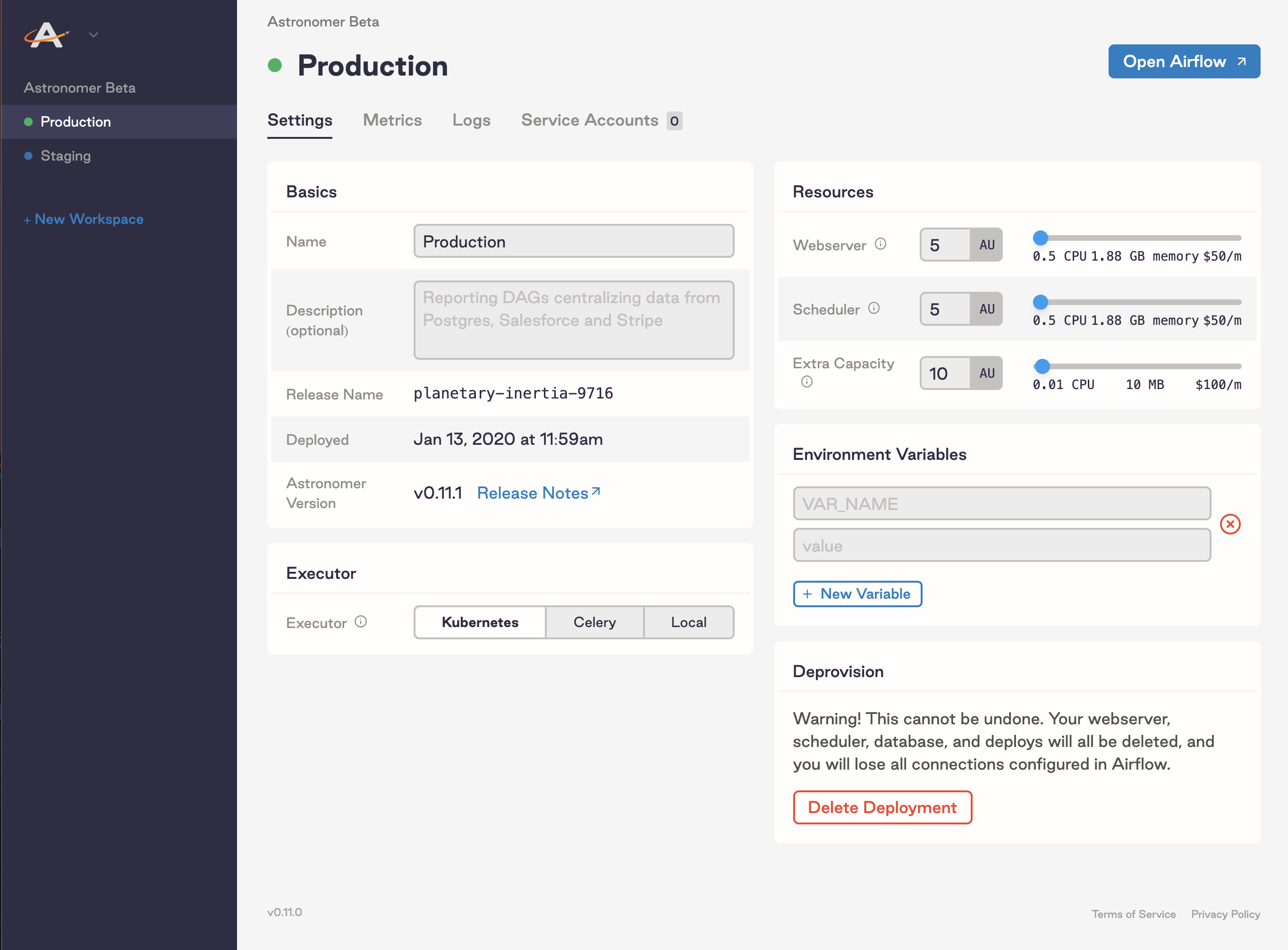

Running Apache Airflow on a Local executor exemplifies single-node architecture. Rather than trying to find one set of configurations that work for all jobs, Astronomer recommends grouping jobs by their type as much as you can. If you have 1 worker and want it to match your deployment's capacity, worker_concurrency = parallelism. Think of it as "How many tasks each of my workers can take on at any given time." This number will naturally be limited by dag_concurrency. The CeleryExecutor for example, will by default run a max of 16 tasks concurrently. Worker_concurrency: Determines how many tasks each worker can run at any given time. Think of this as "maximum tasks that can be scheduled at once, per DAG." You might see: ENV AIRFLOW_CORE_DAG_CONCURRENCY=16 Think of this as "maximum active tasks anywhere." ENV AIRFLOW_CORE_PARALLELISM=18ĭag_concurrency: Determines how many task instances your scheduler is able to schedule at once per DAG. Parallelism: Determines how many task instances can be actively running in parallel across DAGs given the resources available at any given time at the deployment level. They're defined in your airflow.cfg (or directly through the Astro UI) and encompass everything from email alerts to DAG concurrency (see below). When you're learning about task execution, you'll want to be familiar with these somewhat confusing terms, all of which are called "environment variables." The terms themselves have changed a bit over Airflow versions, but this list is compatible with 1.10+.Įnvironment variables: A set of configurable values that allow you to dynamically fine tune your Airflow deployment. The difference between Executors comes down to the resources they have at hand and how they choose to utilize those resources to distribute work (or not distribute it at all). The executor works closely with the scheduler to determine what resources will actually complete those tasks (using a worker process or otherwise) as they're queued. 
The scheduler reads from the metadata database to check on the status of each task and decide what needs to get done and when. The Metadata Database keeps a record of all tasks within a DAG and their corresponding status ( queued, scheduled, running, success, failed, and so on) behind the scenes. See Introduction to Apache Airflow.Īfter a DAG is defined, the following needs to happen in order for the tasks within that DAG to execute and be completed: To get the most out of this guide, you should have an understanding of: Understand the purpose of the three most popular Executors: Local, Celery, and Kubernetes.Contextualize Executors with general Airflow fundamentals.Understand the core function of an executor.Even if you're a veteran user overseeing 20 or more DAGs, knowing what Executor best suits your use case at any given time isn't always easy - especially as the OSS project (and its utilities) continues to grow and develop. If you're new to Apache Airflow, the world of Executors is difficult to navigate.
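
The three concurrency settings discussed in this guide (parallelism, dag_concurrency, and worker_concurrency) interact with the number of workers in a way that is easier to see with numbers. The sketch below is a deliberately simplified toy model, not Airflow's actual scheduling logic: the `ConcurrencySettings` class and both functions are hypothetical names introduced for illustration, and the model assumes the only limits in play are the three settings and the worker count (it ignores pools, queues, and machine resources).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConcurrencySettings:
    """Hypothetical container for the three settings described in this guide."""
    parallelism: int         # "maximum active tasks anywhere"
    dag_concurrency: int     # "maximum tasks that can be scheduled at once, per DAG"
    worker_concurrency: int  # "how many tasks each of my workers can take on"


def deployment_capacity(settings: ConcurrencySettings, num_workers: int) -> int:
    """Simplified upper bound on tasks running at once across the deployment.

    Assumption: capacity is capped both by parallelism and by the total
    number of task slots the workers offer.
    """
    return min(settings.parallelism, num_workers * settings.worker_concurrency)


def per_dag_capacity(settings: ConcurrencySettings, num_workers: int) -> int:
    """Simplified upper bound on tasks running at once for a single DAG."""
    return min(settings.dag_concurrency, deployment_capacity(settings, num_workers))


if __name__ == "__main__":
    # Values taken from the examples in this guide: parallelism=18,
    # dag_concurrency=16, and the CeleryExecutor default of 16 tasks per worker.
    settings = ConcurrencySettings(parallelism=18, dag_concurrency=16, worker_concurrency=16)
    for workers in (1, 2):
        print(
            f"workers={workers} "
            f"deployment={deployment_capacity(settings, workers)} "
            f"per_dag={per_dag_capacity(settings, workers)}"
        )
```

Under this model, a single worker with worker_concurrency=16 cannot reach a parallelism of 18, which is why matching worker_concurrency to parallelism makes sense when you have exactly one worker. Adding a second worker raises the slot count past parallelism, so parallelism becomes the binding limit instead.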
